The Step-By-Step Guide to Creating Your First Email A/B Test

Puru Choudhary

January 20, 2015

One attitude that can bring down profits is “if it ain’t broke, don’t fix it.” While it’s true that something that is broken should be attended to, it’s also true that you can make things work better with testing and optimization. If you could improve your email click-through rates by 22% with a few tests, you’d be silly not to, right? Lucky for you, most email marketing tools now offer A/B testing features. You can create two versions of an email, see which performs better, and use that data to improve your email marketing efforts. The end result is a more engaged email list and a healthier bottom line.

Ready to get started? Here are three places to kick off your testing:

Sending day/time

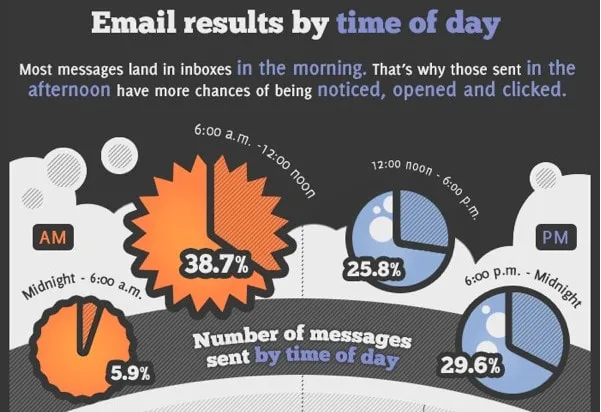

A snippet from the GetResponse email send times infographic.

There’s a lot of data out there on the “best” send time, and much of it conflicts. MailChimp found that subscribers generally engaged more on weekdays. The ideal time was around 10 AM (in the subscriber’s time zone), but not by a strong majority: although it was the best single time overall, it was optimal for only 7% of subscribers, which means it wasn’t the ideal time for most of them.

One set of data, put together by GetResponse, found that morning and early afternoon emails got the best results. Other business owners, quoted at Copyblogger, found their optimized send time and day varied wildly based on their audience and whether their product was B2B or B2C. Counterintuitively, many business owners found that emails sent on nights and weekends got better results. Either way, the MailChimp data found that users who tested and optimized their send times increased open rates by an average of 9.3% and click-through rates by 22.6%. Not too shabby.

When you’re optimizing your email send time, it’s best to start out with a wide discrepancy (morning vs. evening) rather than a smaller one (8 AM vs. 9 AM). That way, you’re likely to get a better feel for the general best time for your audience, and then you can home in on the specific best time. Make sure you’re not just looking at open rates, but click-through rates and conversions to purchases, too. It’s also worth noting that sending in the recipient’s local time zone makes a big difference. If that’s an option within your email program, definitely use it.
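To make the comparison concrete, here is a minimal sketch of looking at all three metrics side by side for a morning and an evening send. The counts are invented for illustration, the `VariantStats` class is a hypothetical helper rather than part of any email tool, and each rate is assumed to be measured against delivered emails (some tools measure click-through against opens instead).

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    """Raw counts for one version of an email (illustrative numbers only)."""
    name: str
    delivered: int
    opens: int
    clicks: int
    purchases: int

    def open_rate(self) -> float:
        # Share of delivered emails that were opened.
        return self.opens / self.delivered

    def click_through_rate(self) -> float:
        # Share of delivered emails that got a click (an assumed definition).
        return self.clicks / self.delivered

    def conversion_rate(self) -> float:
        # Share of delivered emails that led to a purchase.
        return self.purchases / self.delivered


# Hypothetical results from a morning vs. evening test.
morning = VariantStats("morning", delivered=1000, opens=250, clicks=40, purchases=4)
evening = VariantStats("evening", delivered=1000, opens=220, clicks=55, purchases=9)

for v in (morning, evening):
    print(
        f"{v.name}: open {v.open_rate():.1%}, "
        f"click {v.click_through_rate():.1%}, "
        f"purchase {v.conversion_rate():.1%}"
    )
```

In this made-up data the morning send wins on opens, but the evening send wins on clicks and purchases, which is why open rate alone can point you at the wrong send time.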

Subject line

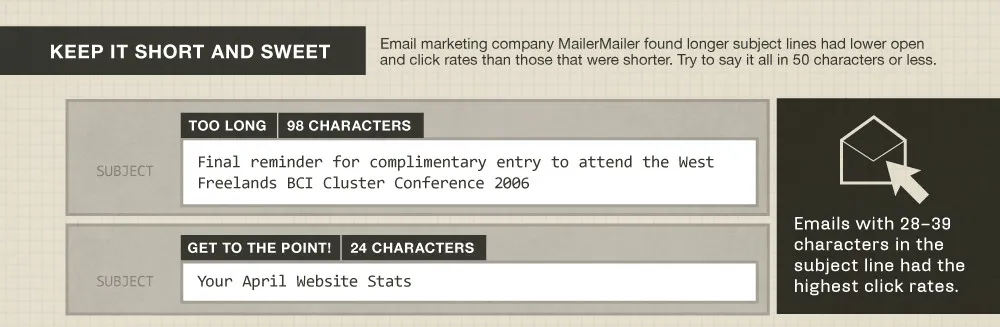

A snippet of the Litmus subject lines infographic.

If there’s one part of email marketing that’s been written about more than any other, it’s the subject line. With lists upon lists of subject line best practices everywhere you look, you’ll have plenty of inspiration for testing.

Here are a few places to start when you’re testing a subject line:

- Do the opposite of whatever you normally do. This is the same school of thought as the drastic differences in send times—it might tank, or it might do better than average. Either way, you’ll get ideas for where to go from there.

- Try adding your brand or business name at the beginning of the subject line (e.g. instead of “Sale starts today!”, try using “[Bob’s Tool Shed] Sale starts today!”).

- Try a more vague subject line that’s meant to pique the reader’s curiosity. See how it performs compared to a straightforward subject line that tells the reader exactly what’s in the email.

- Add customization into the subject line, like the name of the subscriber or their location.

- Make sure to keep your subject lines short (28-39 characters). This has always been considered a best practice, but it’s even more important now, with VentureBeat reporting that 65% of emails are being opened on a mobile device first. Smaller screens mean less room for subject lines, so keep ‘em short and snappy.
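If you draft several subject line variants at once, a quick length check can flag the ones that fall outside the 28-39 character range mentioned above. This is only a sketch: the function name `in_recommended_range` is hypothetical, and the range itself is a guideline to test against, not a hard rule.

```python
MIN_CHARS = 28  # lower bound of the range quoted in the article
MAX_CHARS = 39  # upper bound of the range quoted in the article


def in_recommended_range(subject: str) -> bool:
    """Return True if the subject line length falls within the quoted range."""
    return MIN_CHARS <= len(subject) <= MAX_CHARS


# Candidate subject lines for a test (examples adapted from the list above).
candidates = [
    "Sale starts today!",
    "[Bob's Tool Shed] Sale starts today!",
]

for subject in candidates:
    status = "within range" if in_recommended_range(subject) else "outside range"
    print(f"{len(subject):>3} chars, {status}: {subject}")
```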

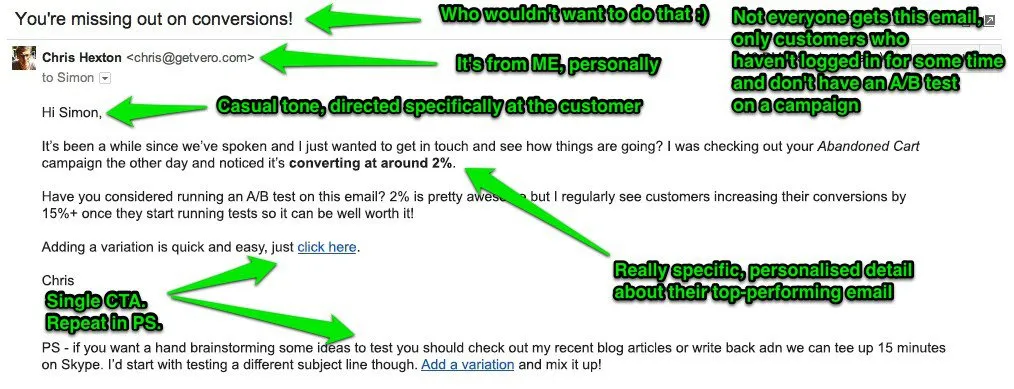

The “PS” note or call to action

There’s a lot going on in this email breakdown (read the full post here), but note that there’s a CTA repeated twice, once in a PS note.

Every email should have a strong, clear call to action (CTA). After all, you’re trying to get the reader to do something after they open the email (aren’t you?). An often recommended tactic for this is adding a CTA within a “PS” note at the end of the email. That might or might not work depending on your email marketing style, but it’s worth trying.

When it comes to your call to action, there are a few places you can get started:

- Try creating a low-pressure, low-commitment call to action. MarketingExperiments found that lower-pressure CTAs (“View details” or “Learn more”) outperformed higher-pressure CTAs that involved work on the reader’s end, like “Shop now” or “Find your solution.” Of course, you’ll want to compare it to a more promotional CTA, because for your readers, that might perform better.

- In the same vein, try a few variations on the phrasing. You might not think that “Click to continue” would make a huge difference over “Continue to article” or “Read more.” But when MarketingSherpa tested those phrases, they found it did make a difference of 8.53% in click-through rate. Not too shabby!

- Test a button against a text link. You’d think a button would automatically increase clicks, but when AWeber tested it, they found an initial increase in clicks, and then after a few emails the click-through rate was worse than with text links.

- If you do a text link (and especially with the “PS” note at the end of the email), you can also try out bolded or italicized text vs. normal text.

- If you use a button, test different colors. This isn’t always the silver bullet people think it might be, but sometimes there is a clear increase in click-through rates and conversion rates.

Your email tool can tell you which version of the CTA received the most click-throughs, but if you want extra data on what those visitors did after clicking through to your site, consider using different links for the calls to action, with UTM parameters. (If you need a tool to help you manage that process, we’re here for you.)

For example, if you want to test a CTA of “Buy Now” against “Learn More”:

For “Buy Now”, the underlying URL could look something like this:

www.example.com/?utm_campaign=holiday_sale&utm_medium=email&utm_source=newsletter&utm_content=buy_now

And for “Learn More”, the URL might look like this:

www.example.com/?utm_campaign=holiday_sale&utm_medium=email&utm_source=newsletter&utm_content=learn_more

Now you can see which CTA leads to more conversions in Google Analytics, rather than just knowing the click-through rate of the different calls to action.
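If you build these links by hand, typos in the parameters are easy to make. One way to generate the tagged URLs for each CTA variant is sketched below with Python's standard `urllib.parse` module. The helper name `add_utm` is hypothetical, the `https://` scheme is an assumption (the examples above omit it), and the default values simply mirror the example campaign.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def add_utm(base_url: str, content: str,
            campaign: str = "holiday_sale",
            medium: str = "email",
            source: str = "newsletter") -> str:
    """Return base_url with UTM parameters appended to its query string."""
    parts = urlsplit(base_url)
    # Keep any query parameters the URL already has.
    query = dict(parse_qsl(parts.query))
    query.update({
        "utm_campaign": campaign,
        "utm_medium": medium,
        "utm_source": source,
        "utm_content": content,  # identifies which CTA variant was clicked
    })
    return urlunsplit(parts._replace(query=urlencode(query)))


# One tagged link per CTA variant in the test.
for cta in ("buy_now", "learn_more"):
    print(add_utm("https://www.example.com/", cta))
```

Only `utm_content` differs between the two links, so the two variants show up under the same campaign in your analytics while staying distinguishable from each other.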

You might notice we didn’t touch on testing the body text. You can, of course, do that, but there’s such a wide variety of ways to go when experimenting with your email’s content that it’s hard to summarize. You can:

- Test a long vs. short email

- Test an email that has the same content, but formatted differently (bulleted lists, split up under headers)

- Test a “personal letter” style of email vs. a newsletter-style email

- Test having lots of graphics vs. having no graphics

You get the idea. And I would recommend trying some of these changes out—once you’ve tested the other elements we’ve covered (because those are typically the areas where you can see a drastic result fairly quickly).

Ready to get started? Choose one of the above areas to work on with your next email and test away—you might be surprised at the results!
